Gensler released a study last week. Sixteen thousand office workers across sixteen countries. Clean data, credible methodology, and a conclusion that’s hard to argue with on its face: employees who use AI the most are also the most connected to their colleagues.

The more interesting exercise wasn’t reading the study. It was asking two AI systems to interpret it and then comparing the results.

Both readings were credible. Neither was complete. And the gap between them reveals something more important than anything in the original research.

The First Read: Sharp, Fast, and Commercially Aware

The initial analysis came back quickly. It flagged the right things.

Gensler is a global architecture and design firm. Their business depends on clients investing in physical workplaces. So when their research concludes that “the office becomes more important in the AI era,” you notice the alignment between finding and fee.

The first read also correctly identified the selection effect buried in the methodology. The study’s “AI power users”—the 30 percent of workers who use AI regularly in both work and personal life—are not a random cross-section of the workforce. They are, by definition, people who experiment early, tolerate ambiguity, and seek leverage. Those traits existed before AI. The tools simply amplify them.

AI power users are not created by AI. They are revealed by it.

So when the data shows these workers are more collaborative, more engaged, and more connected to their teams, correlation is doing a lot of work. They would likely score higher on those measures in any era. The first read was right to flag this, and right to suggest you treat the piece as a brief rather than a study.

But it stopped there. And that’s where the second read began.

The Second Read: Slower, Structural, and Forward-Looking

The second analysis accepted the first read’s skepticism and then moved past it into territory the article never reached.

The most important point: AI doesn’t simply free up time for more human activity. It reallocates cognitive load. When AI compresses execution, what expands in its place isn’t leisure—it’s the burden of verification, the weight of more options, and the decision fatigue that comes from having too many “good enough” answers.

This is the part the Gensler piece—and the first analysis—largely skipped. The article frames freed-up time as automatically redirected toward learning and collaboration. In practice, three other things often happen: work expands to fill the space, quality control burden increases, and the volume of decisions rises faster than the capacity to make good ones.

The second read also tightened the “office matters more” claim into something more precise. The real shift isn’t that offices become more important. It’s that the mismatch between space and task becomes more expensive. AI creates two distinct modes of work: high-focus, tool-driven work that often improves in solitude, and high-ambiguity, human-alignment work that benefits from proximity. Collapsing those into a single conclusion about office attendance misses the operational reality.

It’s not that the office matters more. It’s that putting the wrong people in the wrong space—for the wrong kind of work—now costs you more than it used to.

What Changed Between the Two Reads

The second read didn’t contradict the first. It extended it.

The first moved from observation to skepticism. The second moved from skepticism to implication. Each pass found something the previous one missed, which is itself a useful demonstration: interpretation compounds. The signal in a piece of research doesn’t fully emerge on first contact.

What deepened most was the structural consequence, the part both analyses were circling without quite landing on. AI isn’t just changing how people work. It’s changing which people matter most in a given workflow. And that’s a different, harder conversation than anything in the Gensler article.

What Both Reads Still Missed

Honesty requires naming this.

The Gensler survey draws from sixteen countries—Japan, Germany, the United States, and thirteen others. Work culture, spatial norms, and attitudes toward collaboration vary enormously across that range. Aggregating them into a single behavioral profile of “AI power users” papers over variation that would matter significantly if you’re making decisions about a specific market or workforce.

More fundamentally, neither read questioned the premise. The Gensler article defines its research question as: what do power users tell us about the future of work? But that framing assumes AI adoption is the relevant variable—when it may be that the traits driving AI adoption are the variable, and AI is just the current expression of them. That’s a different question, and it leads to different conclusions about what organizations should actually do.

The Real Shift: Who Becomes More Valuable

Here is what the Gensler study is really pointing at, even if it doesn’t say it directly.

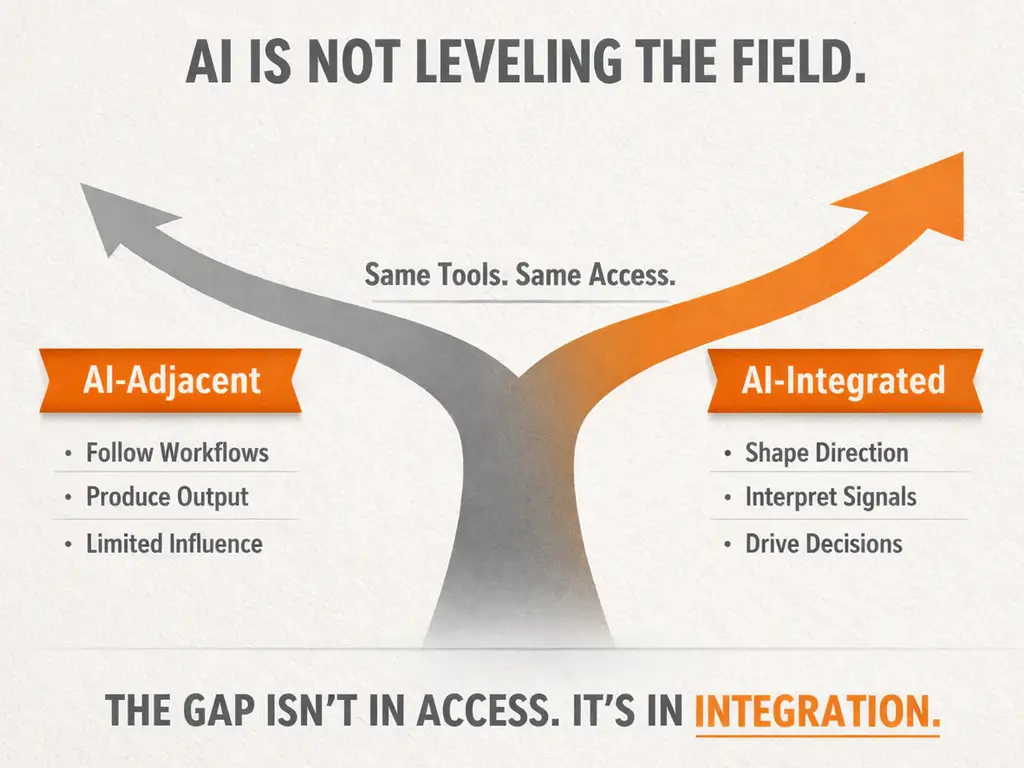

The workforce is bifurcating. Not by industry, age, or job title—though those correlate. By integration ability.

AI-Integrated Operators

Use AI as part of how they think. They iterate quickly, combine sources, and drive decisions. Their output shapes the people around them, not just their own work.

AI-Adjacent Workers

Use tools occasionally or not at all. They follow existing workflows and produce outputs. In an increasing number of contexts, they are becoming interchangeable with the tool itself.

The difference is not productivity. It is influence.

Where This Shows Up

For manufacturers, associations, and design professionals, this is not theoretical. It is already visible in the work.

In specification work: the difference between expanding interpretive range and filling templates faster.

In manufacturing strategy: anticipating market signals versus accelerating existing output.

In design and engineering: asking better questions versus generating more options.

In leadership: shaping decisions versus reacting to them.

The gap is not between firms that use AI and those that don’t. It’s between firms that integrate AI into thinking and those that bolt it onto execution.

AI doesn’t level the playing field. It tilts it. And the tilt will not correct itself.

The Cleanest Way to Frame It

Gensler’s data is real. Their conclusions are commercially aligned but not fabricated. Read their piece as a credible dataset wrapped in a strategic argument—useful signal, self-interested framing.

The organizations that benefit from AI will not simply be the ones that use it the most. They will be the ones that integrate it into how they think, align it with how they decide, and recognize—early—where the real gap is forming.

That gap is already here. The question is not whether it will widen. It’s which side of it you’ll be on.

AI doesn’t make work more human. It exposes who is capable of doing human work at a higher level.

Thanks for reading. Let us hear from you!